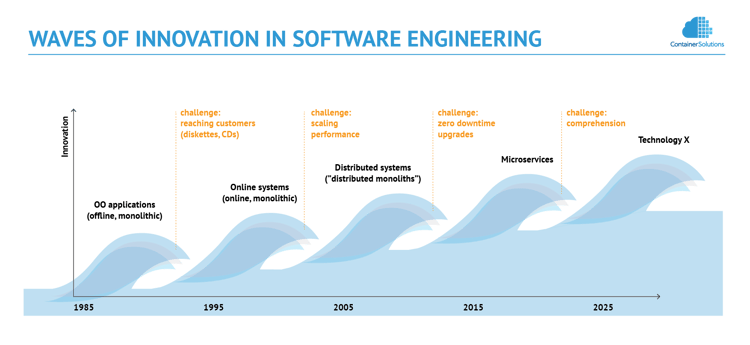

While microservices are a relatively new concept, industry leaders have been applying the same principles (namely high granularity of design, isolation, and automation) for years. What their experience suggests is that we are about to face a technological crisis. It was well expressed by Adrian Cockcroft (former cloud architect at Netflix), who demonstrated how hard it is to understand a system built of thousands of densely interconnected microservices that exist for only seconds or less (just long enough to serve a single request). Our monitoring tools and visualization techniques become useless, unable to deal with processes that are this dynamic. The crisis could be given a name: the crisis of comprehension.

Crises like this recur, as one occurs every time a technology reaches its limits. Fortunately, each also gives birth to a new technology that is free of the most painful limitations of its predecessor. This is the nature of the (Kondratiev) wave patterns known to underlie the occurrence of innovations (see the following diagram). (For those interested in the economic side of this, have a read of the opening chapters of Paul Mason's Postcapitalism, where both long and short waves, and their accompanying crises, are discussed.)

So although microservices are only just starting to get attention, let us try to imagine what kind of technology is going to replace them. In this post, I will try to describe its features based on technological trends that can be observed today.

Software Systems

The DevOps movement is currently very popular, and it is interesting to note that at its essence lies a slowly emerging realisation: complex software systems can be modelled more efficiently with programming techniques than with the methods traditionally used, which were based on concepts familiar to IT Operations (load balancers, subnets, storage options, etc.) but not native to developers. Terms that have recently been gaining attention, such as Programmable Infrastructure or Immutable Infrastructure, clearly indicate that this change is taking place.

The reason is that software systems and software applications follow the same rules, which eventually leads to the observation that software systems are applications, though, surprisingly, not applications as we know them today.

To explain the difference, it is worth looking at how today's applications and software systems deal with stability. For applications, stability is desirable but not as important as functionality, which is why techniques like dynamic software updating never became popular.

For software systems the situation is reversed. High availability is more important than functionality, which is why IT Operations are rarely concerned with what a system actually does, as long as it is up and running. This difference has inspired new implementation strategies. A good example is the Amazon web shop, which seamlessly falls back to serving static content when dynamic data is not delivered in time. In other words, the functionality of a software system can be sacrificed to ensure business continuity.
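The Amazon fallback described above is an instance of what is often called graceful degradation. Below is a minimal Python sketch of the idea. It is not Amazon's actual implementation: `fetch_personalised` is a hypothetical stand-in for a dynamic service, and `STATIC_RECOMMENDATIONS` is an assumed pre-rendered fallback. The request waits for dynamic data up to a deadline and otherwise serves the static content.

```python
import asyncio

# Hypothetical static fallback: pre-rendered content that is always available.
STATIC_RECOMMENDATIONS = ["bestseller-1", "bestseller-2", "bestseller-3"]


async def fetch_personalised(user_id: str, delay: float) -> list[str]:
    """Hypothetical dynamic service call; `delay` simulates its response time."""
    await asyncio.sleep(delay)
    return [f"picked-for-{user_id}"]


async def recommendations(user_id: str, delay: float, timeout: float = 0.1) -> list[str]:
    """Return dynamic content if it arrives in time, otherwise degrade to static content."""
    try:
        # wait_for cancels the slow call once the deadline passes.
        return await asyncio.wait_for(fetch_personalised(user_id, delay), timeout)
    except asyncio.TimeoutError:
        # Sacrifice functionality (personalisation) to keep the page available.
        return STATIC_RECOMMENDATIONS


if __name__ == "__main__":
    print(asyncio.run(recommendations("alice", delay=0.01)))  # dynamic data in time
    print(asyncio.run(recommendations("alice", delay=1.0)))   # degraded to static
```

The caller always gets an answer within roughly `timeout` seconds. Only the quality of that answer varies, which is exactly the trade of functionality for availability described above.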

This leads to the conclusion that software systems will evolve into applications of a new type, distinguished by their goal to survive. This is why reducing functionality, for example when resources are scarce, will be perfectly natural to them, especially if it helps to avoid a severe crash.

Note that this behaviour, namely adaptation, is exhibited even by the simplest biological forms.

Humans

The next observation refers to the role of us, humans.

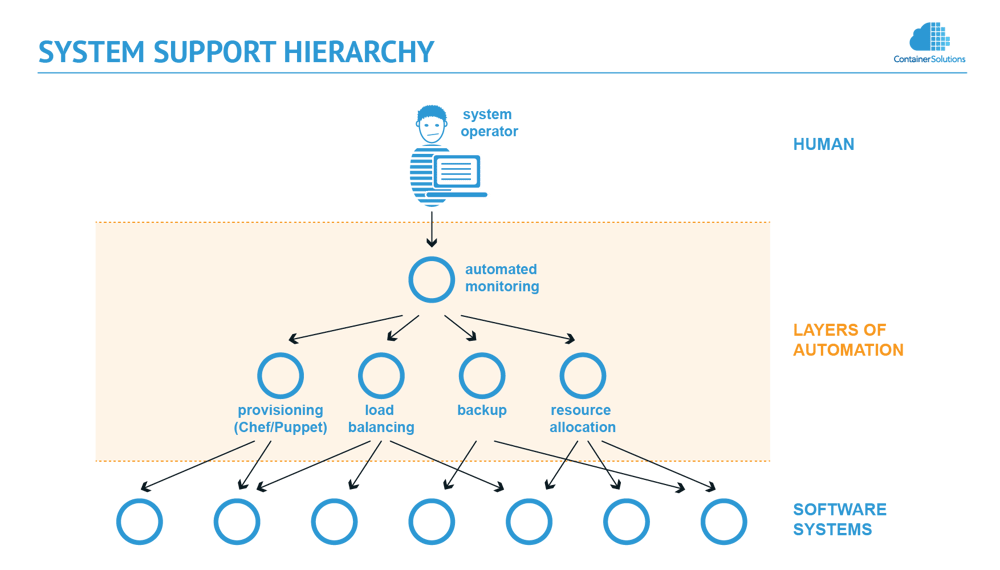

One of the accomplishments of recent years is the automation of system support operations, such as provisioning or backups. This was a natural development, as these operations are costly and error-prone when executed manually. But automation requires constant monitoring, especially if the supported systems are business-critical, and as systems grow bigger, monitoring becomes a complex task in its own right. To counterbalance that, we started automating the monitoring as well, and sometimes went a step further by implementing automated recovery procedures.

But here comes a paradox. Even if all operations were automated, and we created an automated monitoring system that heals software systems when they fail, somebody or something would need to monitor the monitoring system. This could be done by yet another monitoring system, but that would need to be monitored as well. So eventually there is no choice: no matter how many layers of automation are applied, at the top there must be a human, as only the authority of this operator can ensure the overall continuity of operations. Paradoxically, no matter how hard we try, with this approach we can never automate fully.

This reasoning reveals a hierarchical structure in which software systems are under our control.

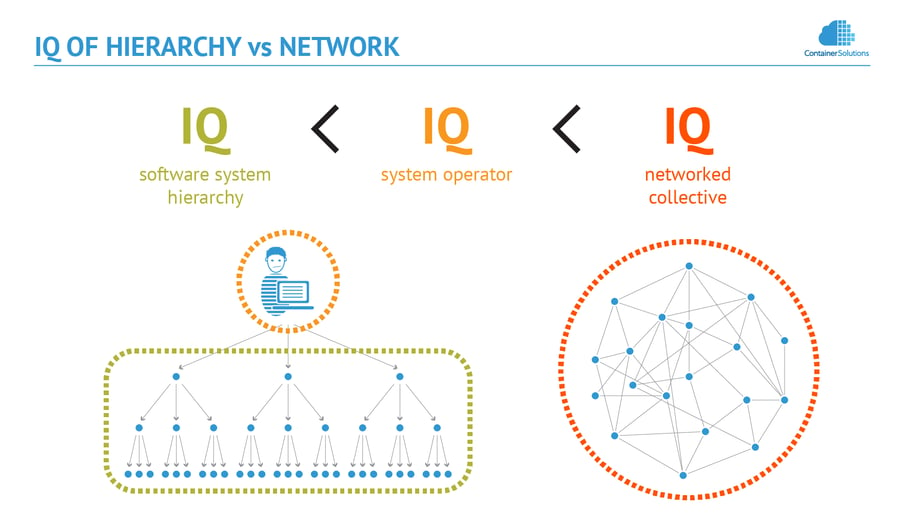

This brings me to my conclusion: once we realise that there is a hierarchy, we can apply the findings of systems theory from engineering, biology, and even psychology. They all point to an intrinsic limitation of hierarchical structures.

One of the first researchers to investigate the subject was Professor Howard H. Pattee, who noted (von Neumann, 1966 [1])

as a system becomes elaborately hierarchical its behaviour becomes simple

and further insight was given by Professor Yaneer Bar-Yam, a physicist and systems scientist, who stated (Bar-Yam, 1997 [2])

A hierarchy, however, imposes a limitation on the degree of complexity of collective behaviours of the system. (…) The collective actions of the system in which the parts of the system affect other parts of the system must be no more complex than the controller. (...) In summary, the complexity of the collective behaviour must be smaller than the complexity of the controlling individual.

His observation that

Hierarchical control structures are symptomatic of collective behaviour that is no more complex than one individual.

leads to the conclusion that software systems are limited by humans: a system cannot grow more complex than its operator's ability to comprehend it. And what Adrian Cockcroft demonstrated suggests that we are fast approaching the limits of our ability to comprehend these systems.

Building The Ecosystem

To escape the limits of hierarchical structures and overcome the crisis of comprehension, a new strategy is clearly needed. To illustrate what it might be, I will use a metaphor.

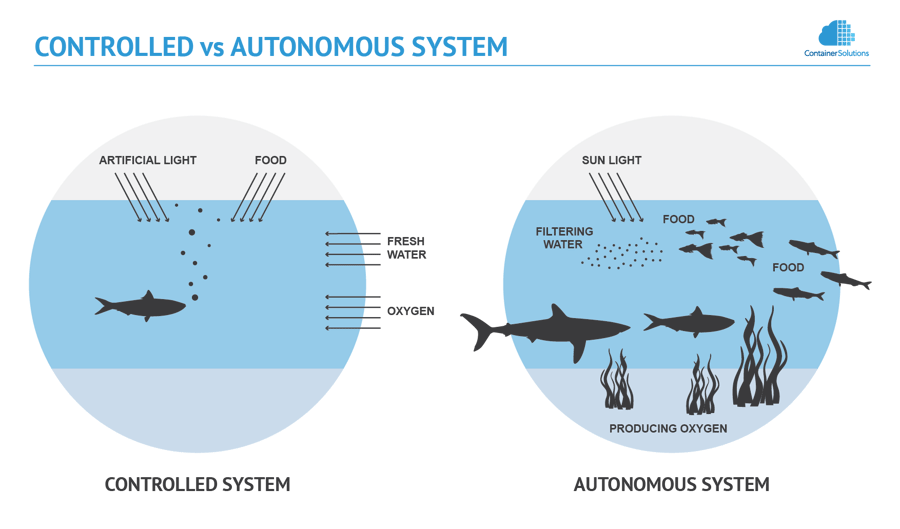

Imagine that software systems are like fish in an aquarium. The way we act now resembles an attempt to keep the fish alive by adding more and more layers of automation to the aquarium: artificial lighting, aerators, water filters, heaters, etc. It is time-consuming, costly, and in the end still requires attention.

But there is an alternative. Instead of automating, we could add plants that provide oxygen, introduce small animals that filter the water, and so on. Then the aquarium is no longer an aquarium: it becomes a small-scale (artificial) lake or ocean, where fish can survive for years on their own, without any human involvement.

In more technical terms, software engineering needs to move away from the hierarchical approach and invent a technology capable of creating an ecosystem of interconnected systems that self-organise, and therefore satisfy their mutual needs without human support. The collective intelligence of such complex systems would not be limited by our comprehension.

Self-organisation is exactly the quality that defines Artificial Life (AL), which naturally directs us to nature as a source of inspiration for building complex systems (Cotsaftis, 2007 [3]):

Natural complex systems are structurally more robust than complicated ones as evidenced by observation of living organisms, the most complex existing systems. Similarly, artificial complicated systems can be made more robust by transforming them into complex ones when linking adequately and strongly enough some of their components.

A good understanding of biological analogies allows us to define the main features of the technology in more detail. Most likely these will be:

- systems will be able to replace 100% of their code without any downtime (main goal is survival)

- new (reactive) programming techniques will be used to express them (software systems are applications)

- the applications will be executed in the context of a new operating system, which will be a successor of today's cloud computing (the ocean)

- the applications will consist of fine-grained services (cells), which will be relatively simple, as their implementation will mainly cover upgrading and scaling (growing and budding in biological terms)

- the basic needs of the applications (computing power, memory) will be satisfied by the operating system (water and oxygen in a biological context)

- self-healing capabilities will be built into the structure of applications (at the cellular level) and supported by the operating system
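The list above does not prescribe how a cell would be built. The following toy Python sketch illustrates the first and last features under a loud assumption: a cell is modelled as a single in-process request handler. `Cell`, `upgrade` and `handle` are hypothetical names, not an existing API. The cell replaces its entire implementation while staying in service, and it reverts to its last known-good version if new code fails.

```python
from typing import Callable

Handler = Callable[[int], int]


class Cell:
    """Hypothetical fine-grained service that can swap its code while running
    and heal itself by reverting to the last version that worked."""

    def __init__(self, handler: Handler) -> None:
        self._handler = handler
        self._known_good = handler  # last version proven to serve a request

    def upgrade(self, new_handler: Handler) -> None:
        # Replace the whole implementation in place. Callers keep using the
        # same Cell object, so there is no restart and no downtime window.
        self._handler = new_handler

    def handle(self, request: int) -> int:
        try:
            result = self._handler(request)
        except Exception:
            # Self-healing at the "cellular" level: fall back to the previous
            # version and serve the request with it instead of crashing.
            self._handler = self._known_good
            return self._handler(request)
        # Promote the current version only after it has succeeded.
        self._known_good = self._handler
        return result


if __name__ == "__main__":
    cell = Cell(lambda x: x + 1)
    print(cell.handle(1))            # original code
    cell.upgrade(lambda x: x * 10)
    print(cell.handle(2))            # upgraded code, now known-good
    cell.upgrade(lambda x: 1 // 0)   # a broken upgrade
    print(cell.handle(3))            # reverted to the x * 10 version
```

A new version becomes the known-good fallback only after it has served a request successfully. This keeps a broken upgrade from overwriting the fallback. Erlang/OTP's hot code loading is a real and far more complete mechanism for replacing running code.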

Building a self-organizing ecosystem of this sort is certainly a complex task, but the basic technologies needed for it are already emerging in various disciplines of software engineering. The work has already begun.

Summary

The way to overcome the limits of our comprehension is to create a series of new technologies that will allow software systems to self-organize. As a consequence, technology will move away from the traditional model of supervisory control towards systems that operate based on our intention (Cotsaftis, 2007 [3]):

a new step is now under way to give man made systems more efficiency and autonomy by delegating more “intelligence” to them (...) This new step implies the introduction of “intention” into the system and not to stay as before at simple action level of following prescribed fixed trajectory dictated by classical control.

Microservices take the first step in that direction. The next wave of technological evolution will bring the first solutions in which human supervisory involvement is not necessary, because software systems collectively exhibit a primitive form of Artificial Intelligence (“Small AI”), similar to microbial intelligence in the biological realm. (Behaviour emerges from the interactions.)

If this reasoning is correct, the transition to these new technologies will deeply change software engineering. Some of these changes have been described in the past. In the next posts I will present some concepts and techniques that will be necessary for designing the new technologies.

References

[1] John von Neumann, Theory of Self-Reproducing Automata (1966)

[2] Yaneer Bar-Yam, Complexity rising: From human beings to human civilization, a complexity profile, Encyclopedia of Life Support Systems (EOLSS UNESCO Publishers, Oxford, UK, 2002); also NECSI Report 1997-12-01 (1997).

[3] Michel Cotsaftis, What Makes a System Complex? An Approach to Self-Organization and Emergence (2007)
