The .NET experience with microservices (.NET Core/Docker/Kubernetes/WeaveNet/Azure)

A few of our recent posts featured WeaveWorks’ Sock Shop demo application - an educational project that demonstrates how to build a complex microservice application that not only looks good, but also does something useful.

The educational value of the application lies in its architecture. It is unusual to see so many technologies in one place: databases (MySQL, MongoDB, etc.) and programming languages (Go, Java, Node.js), all combined to achieve a single goal and able to run on multiple deployment platforms. Heterogeneous architectures like this are where the power of microservices shines. Now, with just one click, you can build a setup that in the past required a few weeks of hard work.

This post showcases the .NET platform, a new player in the Docker ecosystem. I will show you how to build a .NET component and how to integrate it with the rest of the microservices.

It’s worth mentioning that .NET is new to the container world for two reasons.

First, it was recently announced that there would be a Windows Server version of the Docker engine for running containers natively on Windows. This lets you wrap your existing Windows applications in containers and make them believe that their execution environment never changes, which is the core power of containers.

Second, Microsoft has only recently started to expand its presence in the Linux ecosystem. Nowadays .NET applications can run on Linux on top of Mono - an open-source implementation of the .NET Framework (now officially sponsored by Microsoft) - or on top of .NET Core - an open-source version of .NET.

So now, if I want to dockerize a .NET application, I have the following options to choose from:

- Building a Windows container to be run on Windows Server 2016

- Building a Linux container:

  - with Mono as a .NET platform

  - or as a Linux ASP.NET application running on .NET Core.

The last option was the most tempting for me, as the documentation promises that .NET Core is light enough to be used even for IoT applications, which suggests that its energy footprint is very low. It doesn’t come for free, though. Some effort is required to port existing .NET applications to the platform, as .NET Core is “a redesigned version of .NET that is based on the simplified version of the class libraries” (http://tirania.org/blog/archive/2014/Nov-12.html), which means that the API is very similar but not identical.

The idea behind my experiment was to write an application from scratch that would have the same functionality as one of the existing microservices in Sock Shop. With no existing .NET code to port, this goal did not constrain my choice of platform, so I decided to use .NET Core.

So the project was defined as follows:

- Take the most complex microservice in the Sock Shop demo

- Recreate its functionality in .NET Core

- And show how you can swap the two implementations on a running cluster

For me that meant:

- Taking the orders microservice - a Java component that acts as a facade to other microservices.

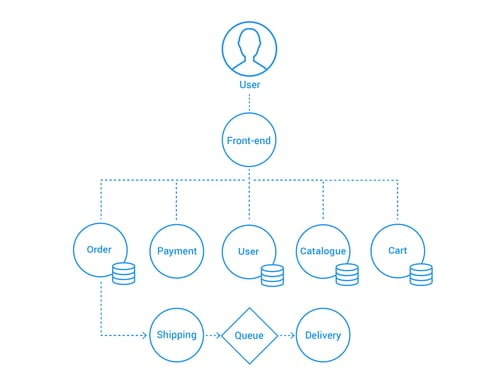

(A more detailed diagram here.)

- Rewriting it in .NET in such a way that the other microservices won’t notice whether they are talking to the Java implementation or the .NET version of the microservice.

- Deploying all of this on a Kubernetes cluster with Weave Net on top of the Azure cloud (more on this choice later).

The learning path was steep, but the goal has been achieved. The original and the new .NET implementation produce Docker images that can be used interchangeably

- weaveworksdemos/orders (from https://github.com/microservices-demo/orders)

- weaveworksdemos/orders.net (from https://github.com/microservices-demo/orders.NET)

Here are a few lessons that I learned along the way.

Choosing the platform

As I wanted to have the full Microsoft experience, I decided to try the Microsoft Azure cloud. But the choice of an orchestration system was not that obvious.

Sock Shop comes with deployment configurations for several microservice platforms: AWS ECS, Kubernetes, Mesos+Marathon (which means that DC/OS is also supported), Swarm, Nomad and others. On the other hand, the Container Service on the Azure cloud offers only DC/OS and Swarm out of the box.

UPDATE: Microsoft has just announced official support for Kubernetes. Check this out.

So it looked like DC/OS and Swarm were where the two worlds met, but the difficulty was that Sock Shop needed an overlay network in order to provide each container with its own globally routable IP address. Unfortunately, when I tried DC/OS, there was no overlay network I could easily use:

- Calico is supported on DC/OS but not on Azure (GitHub issue)

- Weave Net works on Azure, but is not supported by DC/OS (no Weave Net package in DC/OS universe), so its installation is cumbersome

UPDATE: DC/OS support on Azure has just been upgraded to v1.8.4. This version provides navstar out of the box - a DC/OS overlay network that uses CNI (Container Network Interface). More information here.

The new version of Docker Swarm released as part of Docker 1.12 provides overlay networking, but does require some manual steps. This is also the case for setting up Weave Net with Swarm (instructions).

So instead of DC/OS, I decided to use Kubernetes, deployed according to its instructions, which pointed me to the WeaveWorks repository https://github.com/weaveworks-guides/weave-kubernetes-coreos-azure

All I needed to do was:

git clone https://github.com/weaveworks-guides/weave-kubernetes-coreos-azure

cd weave-kubernetes-coreos-azure

export AZ_VM_SIZE=Medium

./create-kubernetes-cluster.js

and it worked.

(Update: Now you can achieve the same result in a cleaner way via Azure Container Service.)

This way Kubernetes with Weave Net on Azure became my platform.

Starting the project

After I installed .NET Core on my machine (go to https://www.microsoft.com/net/core for instructions) I had to start the actual coding.

Luckily, initializing the project was fairly easy. Microsoft’s guide Building Docker Images for .NET Core Applications pointed me to the Yeoman generator. To create a stub of my new project I only had to run:

yo aspnet

and answer a few questions. After that I had an initial project with the Dockerfile included. It was a nice experience.

Choosing IDE

To feel like a .NET developer I wanted to use Visual Studio, as it supports “Any Developer, Any App, Any Platform” (https://www.visualstudio.com/). Then I realized that my platform (macOS) is supported by its light version, the Visual Studio Code IDE. It lacks many Visual Studio features that I had read about, such as generating a class model from JSON data (which I needed at some point), but it still did its job pretty well. Its strong points are Git integration and the ⌘p shortcut, which provides easy access to build and test functions.

In general the experience was positive. It’s a nice, light editor.

Coding

Writing the actual code that does something turned out to be quite challenging. There were a few reasons for that.

Dependency management

First of all, NuGet, the main distribution channel for .NET packages, does not clearly indicate which packages are compatible with .NET Core (GitHub issue). Many times I added a dependency to my project.json file:

"dependencies": {

...

"MongoRepository": "1.6.11"

...only to discover that, after running

dotnet restore

the package was not compatible with my environment:

Package MongoRepository 1.6.11 is not compatible with netcoreapp1.0 (.NETCoreApp,Version=v1.0). Package MongoRepository 1.6.11 supports:

- net35 (.NETFramework,Version=v3.5)

- net40 (.NETFramework,Version=v4.0)

- net45 (.NETFramework,Version=v4.5)
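When a project has many dependencies, it can help to pull just the offending package names out of the restore output. The following is a minimal sketch that assumes the message format shown above; `incompatible_packages` is a hypothetical helper name, not part of the dotnet CLI.

```shell
#!/usr/bin/env bash

# Hypothetical helper: reads `dotnet restore` output on stdin and prints
# "<package> <version>" once for every package reported as incompatible.
# Assumes messages of the form:
#   Package <name> <version> is not compatible with <framework> ...
incompatible_packages() {
  sed -n -E 's/^.*Package ([^ ]+) ([^ ]+) is not compatible with .*$/\1 \2/p' | sort -u
}
```

It could be used as `dotnet restore 2>&1 | incompatible_packages`. The lines listing the supported frameworks are ignored here; checking them by eye is still the quickest way to see whether a package could work on .NET Core at all.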

Suffice it to say that when I needed server and client libraries for HAL (Hypertext Application Language), out of the list of 10 C# libraries I could use only two.

In some cases I could install a package, but it then crashed at run-time. HalKit, for example, would not work as a package but could be used when imported as source code. This could indicate binary-level compatibility problems between .NET Core and NuGet libraries (NuGet distributes packages as DLLs), but I haven’t investigated it further. More details here.

Being unable to use many of the existing libraries turned out to be quite costly in terms of time, as I had to build higher-level abstractions on top of low-level libraries (MongoDB driver, HAL client/server) myself.

Documentation

Related to this is the scarcity of documentation. .NET Core is a relatively new platform, whereas most of the existing .NET documentation refers to libraries that are simply not compatible with it. So in many cases it is hard to find a solution to the problem at hand. Quite often I had to analyze source code in various repositories until I found one.

Java-.NET interoperability

Another reason why reimplementing the service in .NET was not easy was the number of small differences in how the Spring and .NET libraries interpret the HAL protocol. As a result, additional effort was required to produce JSON that the Spring counterparts could interpret properly, and to interpret their output.

All of this made the implementation of the orders service quite difficult. I quickly came to appreciate the power of the Java Spring framework, which you can see if you compare the final implementations of:

- Java controller https://github.com/microservices-demo/orders/blob/master/src/main/java/works/weave/socks/orders/controllers/OrdersController.java

- .NET controller https://github.com/microservices-demo/orders.NET/blob/master/Controllers/OrdersController.cs

The two implementations are functionally equivalent, but the Java controller is clearly much more concise. One reason is that Java Spring automatically generates routes to getters (to read the status of shop orders), whereas in .NET I had to write them by hand.

Summary

This little project turned into an experience of more than three weeks, but I am quite happy with the result. Now you can go to the Sock Shop main website (https://microservices-demo.github.io/), choose the platform you wish, and simply replace the name of the Docker image in the deployment script from weaveworksdemos/orders to weaveworksdemos/orders.net. You get a working microservice application with a .NET component happily answering all the requests. Try it yourself, and I hope you enjoy it!
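The image swap itself can be sketched as a small shell function. This is only an illustration under a few assumptions: the deployment script references the image as an unquoted name at the end of a line, optionally followed by a tag, and any such tag also exists for the .NET image. The name `swap_orders_image` is hypothetical.

```shell
#!/usr/bin/env bash

# Hypothetical helper: prints a copy of a deployment script in which the
# orders image is replaced by its .NET counterpart. The input file itself
# is left untouched.
swap_orders_image() {
  local manifest="$1"
  # Match the image name only when it is followed by an optional ":tag" and
  # the end of the line, so that other images whose names merely start with
  # "weaveworksdemos/orders" (or an already swapped orders.net) stay as is.
  sed -E 's#(weaveworksdemos/orders)(:[^[:space:]]*)?[[:space:]]*$#\1.net\2#' "$manifest"
}
```

On Kubernetes, for example, the output could be piped straight to the cluster with `swap_orders_image <manifest file> | kubectl apply -f -`. Printing to stdout rather than editing in place makes it easy to review the change before applying it.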
