For the past few weeks we’ve been posting a blog series on Cloud Native which, in true tech style, has been bunged full of buzzwords. We tried to explain them as we went along, but probably not very well, so let’s step back and review them with a quick Cloud Native Glossary.

Container Image - A package containing an application and all the dependencies required to run it down to the operating system level. Unlike a VM image, however, a container image doesn't include the kernel of the operating system. A container relies on the host to provide this.

Container - A running instance of a container image. Basically, a container image gets turned into a running container by a container engine (see below).

Containerize - The act of creating a container image for a particular application (effectively by encoding the commands to build or package that application).

Container Engine - A native user-space tool, such as Docker Engine or rkt, which executes a container image, thus turning it into a container. The engine starts the application and tells the local machine (host) what the application is allowed to see or do on the machine. These restrictions are actually enforced by the host's kernel. The engine also provides a standard interface for other tools to interact with the application.

Container Orchestrator - A tool that manages all of the containers running on a cluster. For example, an orchestrator will select which machine to execute a container on and then monitor that container for its lifetime. An orchestrator may also take care of routing and service discovery or delegate these tasks to other services. Example orchestrators include Kubernetes, DC/OS, Swarm and Nomad.

Cluster - the set of machines controlled by an orchestrator.

Replication - running multiple copies of the same container image.

Fault tolerance - a common orchestrator feature. In its simplest form, fault tolerance is about noticing when any replicated instance of a particular containerised application fails and starting a replacement within the cluster. More advanced examples of fault tolerance might include graceful degradation of service or circuit breakers. Orchestrators may provide this more advanced functionality or delegate it to other services.
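To make the simplest form of fault tolerance concrete, here is a minimal sketch in Python. It is not how any particular orchestrator implements it; the names `reconcile` and `start_instance` and the instance names are hypothetical, made up for illustration.

```python
import itertools

def reconcile(desired_replicas, instances, start_instance):
    """Return the running instances after replacing any that have failed.

    `instances` maps an instance name to True (healthy) or False (failed).
    `start_instance` is a hypothetical callback that starts one new
    instance and returns its name.
    """
    # Keep only the instances that are still healthy.
    running = [name for name, healthy in instances.items() if healthy]
    # Start replacements until the desired replica count is met again.
    while len(running) < desired_replicas:
        running.append(start_instance())
    return running

_ids = itertools.count(1)

def start_replacement():
    # Stand-in for asking a container engine to start a new container.
    return f"web-replacement-{next(_ids)}"

observed = {"web-1": True, "web-2": False, "web-3": True}
print(reconcile(3, observed, start_replacement))
```

A real orchestrator would run a check like this continuously and would also have to decide which machine each replacement should run on.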

Scheduler - a service that decides which machine to execute a container on. Many different strategies exist for making scheduling decisions. Orchestrators generally provide a default scheduler which can be replaced or enhanced if desired with a custom scheduler.
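As a rough illustration of a default scheduler that can be swapped for a custom one, here is a small Python sketch. The function names (`schedule`, `spread`, `first_fit`) and the idea of tracking only free CPU per machine are simplifying assumptions, not the API of any real orchestrator.

```python
def spread(cpu_request, free_cpu):
    """Assumed default strategy: pick the machine with the most free CPU."""
    candidates = {m: free for m, free in free_cpu.items() if free >= cpu_request}
    if not candidates:
        raise RuntimeError("no machine has enough free CPU")
    return max(candidates, key=candidates.get)

def first_fit(cpu_request, free_cpu):
    """Example custom strategy: pick the first machine that has room."""
    for machine, free in free_cpu.items():
        if free >= cpu_request:
            return machine
    raise RuntimeError("no machine has enough free CPU")

def schedule(cpu_request, free_cpu, strategy=spread):
    """Choose a machine using the given strategy and reserve the CPU on it."""
    machine = strategy(cpu_request, free_cpu)
    free_cpu[machine] -= cpu_request
    return machine

free_cpu = {"node-a": 2.0, "node-b": 4.0}
print(schedule(1.0, free_cpu))                      # uses the default strategy
print(schedule(1.0, free_cpu, strategy=first_fit))  # uses the custom strategy
```

Passing the strategy in as a function is just one way to model "replace or enhance the default scheduler". Real schedulers weigh many more factors than CPU.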

Bin packing - a common scheduling strategy that places containerised applications in a cluster so as to maximise resource utilisation, typically by fitting them onto as few machines as possible.
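One well-known heuristic for bin packing is "first-fit decreasing": sort the containers from largest to smallest, then put each one on the first machine that still has room. The Python sketch below assumes a single resource (CPU) and uses made-up machine and container names. Real schedulers consider several resources and constraints at once.

```python
from dataclasses import dataclass, field

@dataclass
class Machine:
    name: str
    cpu_capacity: float
    cpu_used: float = 0.0
    placed: list = field(default_factory=list)

    def fits(self, cpu):
        return self.cpu_used + cpu <= self.cpu_capacity

def bin_pack(containers, machines):
    """Place containers using first-fit decreasing.

    `containers` maps a container name to its CPU request. Returns a dict
    of container name to machine name, or None if nothing has room.
    """
    placement = {}
    # Largest requests first, so big containers are not left stranded.
    by_size = sorted(containers.items(), key=lambda item: item[1], reverse=True)
    for name, cpu in by_size:
        target = next((m for m in machines if m.fits(cpu)), None)
        if target is not None:
            target.placed.append(name)
            target.cpu_used += cpu
        placement[name] = target.name if target else None
    return placement

machines = [Machine("node-a", 4.0), Machine("node-b", 4.0)]
requests = {"web": 2.0, "db": 3.0, "cache": 1.0, "worker": 2.0}
print(bin_pack(requests, machines))
```

In this example both machines end up fully used: `db` and `cache` share `node-a`, while `web` and `worker` share `node-b`.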

Monolith - a large, multi-purpose application that may involve multiple processes and often (but not always) maintains internal state information that has to be saved when the application stops and reloaded when it restarts.

State - in the context of a Stateful Service, state is information about the current situation of an application that cannot safely be thrown away when the application stops. Internal state may be held in many forms including entries in databases or messages on queues. For safety, the state data needs to be ultimately maintained somewhere on disk or in another permanent storage form (i.e. somewhere relatively slow to write to).

Microservice - a small, independent, decoupled, single-purpose application that only communicates with other applications via defined interfaces.

Service Discovery - a mechanism for finding out the endpoint (e.g. internal IP address) of a service within a system.
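At its simplest, service discovery can be pictured as a lookup table from service names to endpoints. The Python sketch below is an in-memory toy, with a hypothetical `ServiceRegistry` class and made-up addresses. Real systems usually do this through DNS or a dedicated registry service.

```python
class ServiceRegistry:
    """Toy in-memory registry mapping service names to endpoints."""

    def __init__(self):
        self._endpoints = {}

    def register(self, service, endpoint):
        # Record that an instance of `service` is reachable at `endpoint`.
        self._endpoints.setdefault(service, []).append(endpoint)

    def deregister(self, service, endpoint):
        # Forget an endpoint, for example when its container stops.
        if endpoint in self._endpoints.get(service, []):
            self._endpoints[service].remove(endpoint)

    def lookup(self, service):
        # Return a copy so callers cannot modify the registry by accident.
        return list(self._endpoints.get(service, []))

registry = ServiceRegistry()
registry.register("orders", "10.0.0.5:8080")
registry.register("orders", "10.0.0.6:8080")
registry.deregister("orders", "10.0.0.5:8080")
print(registry.lookup("orders"))
```

An orchestrator would typically register and deregister endpoints itself as it starts and stops containers.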

There's a lot we haven't covered here but hopefully these are the basics.

Read more about our work in The Cloud Native Attitude.

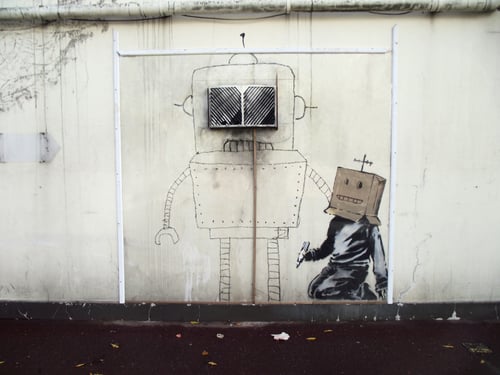

art: https://en.wikipedia.org/wiki/File:Banksy_Torquay_robot.JPG
