We teamed up with Zoover to help them build a new production environment using Google’s hosted version of Kubernetes (GKE), with a Continuous Delivery pipeline based around CircleCI. In this blog, we’ll describe the system we built and explain the decisions we took. We’ll also have a look at the workflow we settled on.

GKE

We picked GKE because it has all the features we need. It is easy to create new production-like environments and it supports zero-downtime updates. It also has some nice-to-have features, including auto-scaling and network-level service discovery, which make configuration much easier.

CircleCI

CircleCI integrates well with GKE, and we use it to build our Docker images. CircleCI uses a simple text file for configuration, which makes versioning and rolling back easy.

Separation Between Production and Testing

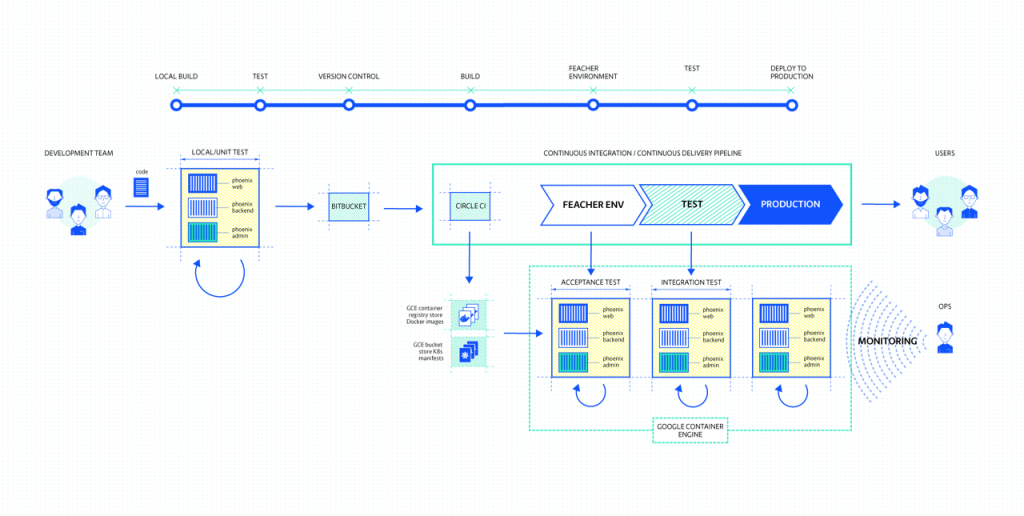

We have two distinct clusters, production and testing. We use Kubernetes namespaces to separate the test environments on our test cluster. See image 1.

Image 1 - Overview of Clusters.
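
To make the namespace-per-environment idea concrete, here is a minimal sketch of how a manifest for one test environment could be generated. The function name, the `purpose` label and the example environment name are our own illustrative assumptions, not part of the Zoover setup.

```python
import json
import re

# Kubernetes namespace names must be valid DNS labels:
# lowercase alphanumerics and '-', at most 63 characters.
_DNS_LABEL = re.compile(r"^[a-z0-9]([-a-z0-9]*[a-z0-9])?$")


def namespace_manifest(env_name: str) -> dict:
    """Return a Namespace manifest for one test environment (hypothetical helper)."""
    if len(env_name) > 63 or not _DNS_LABEL.match(env_name):
        raise ValueError(f"not a valid namespace name: {env_name!r}")
    return {
        "apiVersion": "v1",
        "kind": "Namespace",
        "metadata": {
            "name": env_name,
            # Illustrative label; any labelling scheme would do.
            "labels": {"purpose": "testing"},
        },
    }


if __name__ == "__main__":
    # "test-feature-x" is a made-up environment name.
    print(json.dumps(namespace_manifest("test-feature-x"), indent=2))
```

Because kubectl accepts JSON as well as YAML, the printed manifest could be piped into `kubectl apply -f -` against the test cluster.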

Elastic Cloud

The application connects to Elastic Cloud, which is a hosted Elasticsearch solution. Because Elastic Cloud runs on AWS, we had a concern about latency. However, our tests showed that latency remained under 40ms between Google’s Cloud and AWS. Storing data in Elastic Cloud relieved us of the hassle of managing our own ES setup.
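
A cross-cloud latency check of this kind can be approximated with a few lines of code. The sketch below times TCP connections and compares the results against the 40ms figure. It is not the test we actually ran, the function names are hypothetical, and connection time is only a rough proxy for request latency.

```python
import socket
import statistics
import time


def measure_connect_ms(host: str, port: int = 443, timeout: float = 2.0) -> float:
    """Time a single TCP connection to host:port, in milliseconds."""
    start = time.perf_counter()
    with socket.create_connection((host, port), timeout=timeout):
        pass  # we only care how long the connection took to open
    return (time.perf_counter() - start) * 1000.0


def summarize(samples_ms: list, threshold_ms: float = 40.0) -> dict:
    """Summarize samples and check that every one stayed under the threshold."""
    return {
        "median_ms": statistics.median(samples_ms),
        "max_ms": max(samples_ms),
        "within_threshold": max(samples_ms) < threshold_ms,
    }
```

Collecting a handful of samples with `measure_connect_ms` against your own Elasticsearch endpoint, from a pod inside the cluster, and passing them to `summarize` gives a quick first impression before running a proper load test.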

Development Process

Docker makes it easy to move our application through the stages of the Continuous Deployment pipeline. GKE supports auto-scaling through the Kubernetes cluster autoscaler, which automatically spins up and destroys nodes. This helps us avoid over-provisioning hardware, which keeps costs lower. Together, GKE, Docker and Kubernetes allowed us to create a pipeline that supports our development process. See image 2.

Image 2 - The pipeline.

Deployment to Production

Deployment to the production environment is done by a small script (deploy.sh) that deploys the specified version of the application to the specified environment. We do not rebuild the Docker images when deploying to production; instead, we reuse the existing images that were built and tested earlier in the process. This makes production deployments fully predictable.
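
The real deploy.sh is not reproduced here. As a rough illustration of the idea, the Python sketch below builds a `kubectl set image` command that points an existing Deployment at an already-built image tag. The context names, image repository, deployment name and container name are all hypothetical placeholders.

```python
# All names below are hypothetical placeholders, not Zoover's real values.
CONTEXTS = {"testing": "gke-testing", "production": "gke-production"}
IMAGE_REPO = "gcr.io/example-project/app"
DEPLOYMENT = "app"
CONTAINER = "app"


def build_deploy_command(version: str, environment: str, namespace: str = "default") -> list:
    """Build the kubectl command that rolls an existing image tag out to an environment."""
    if environment not in CONTEXTS:
        raise ValueError(f"unknown environment: {environment!r}")
    # Reuse the image that was built and tested earlier; nothing is rebuilt here.
    image = f"{IMAGE_REPO}:{version}"
    return [
        "kubectl",
        "--context", CONTEXTS[environment],
        "--namespace", namespace,
        "set", "image",
        f"deployment/{DEPLOYMENT}",
        f"{CONTAINER}={image}",
    ]


if __name__ == "__main__":
    # Print the command for a made-up version instead of executing it.
    print(" ".join(build_deploy_command("1.4.2", "production")))
```

Keeping the version and environment as the only inputs mirrors the point above: the same tested image moves between environments, and only the target changes.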

Monitoring with Google Stackdriver

We used Google Stackdriver for monitoring and logging. Stackdriver combines monitoring and log aggregation features, and it works out of the box with GKE. Additionally, Stackdriver aggregates recurring errors, giving a fast and easy way to spot problems. For Zoover, who only have a small operations team, this was a particularly compelling feature. See image 3.

Image 3 - Screen shot of Stackdriver.

Conclusion

Zoover set out to build a truly cloud native system, one that was ‘continuously delivered’, ‘container packaged’, ‘microservice oriented’ and ‘dynamically managed’. In doing so, they were able to dramatically reduce their costs whilst removing friction from their development process. Using the latest technologies, practices and ‘cloud native’ continuous integration, Zoover were able to create one of the most elegant cloud native systems we have ever worked on.

Image 4 - A happy team at Zoover.
